Bad product data costs you money before you know the error even exists. When a distributor lists a hydraulic pump with the wrong thread size, or fails to include a high-resolution image, the result is rarely just a support ticket. It becomes a return, a lost customer, and a hit to your margins.

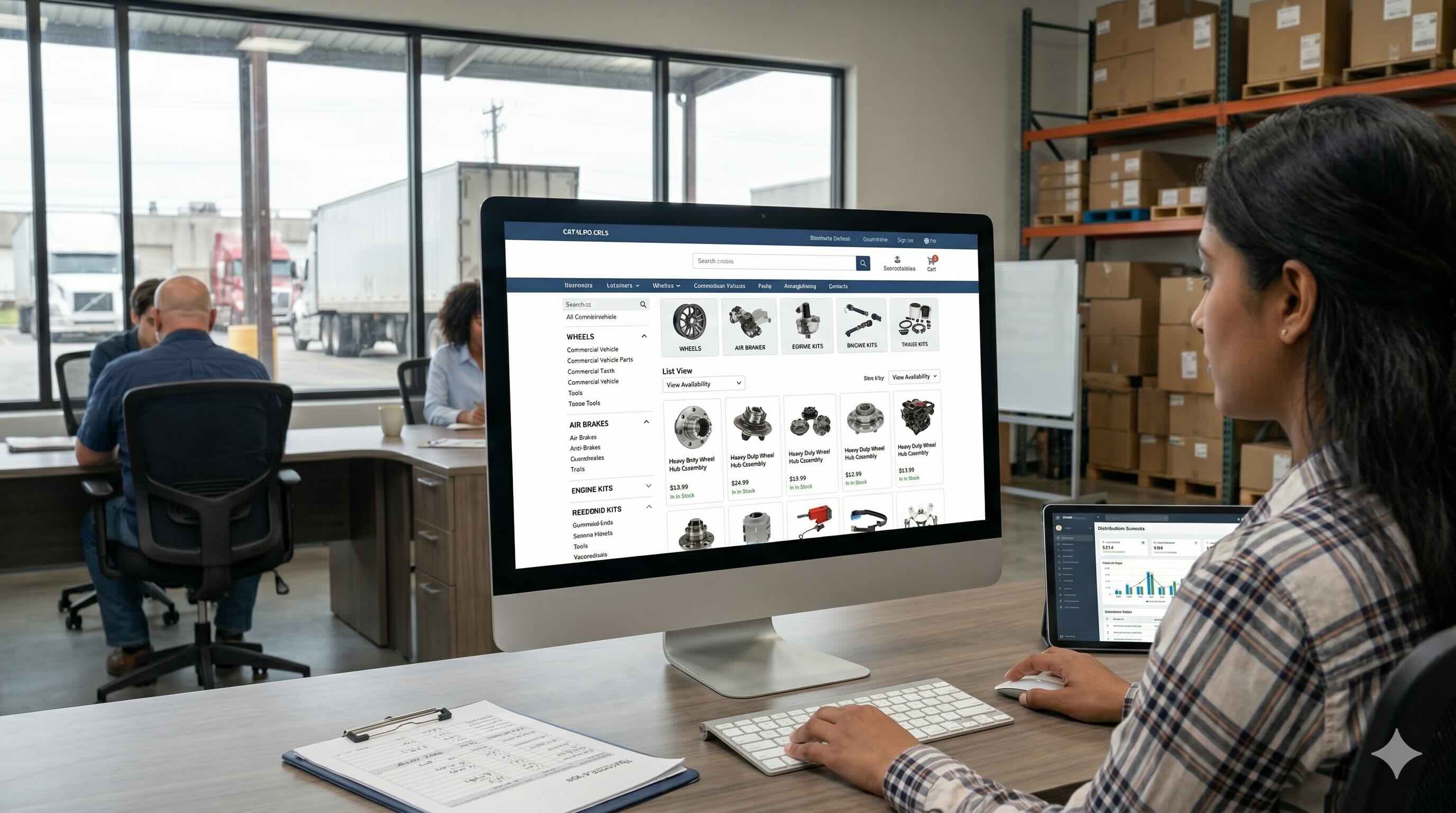

Keeping your catalog organized is table stakes. The job now is building a system that enriches and syndicates product data faster than your competitors so you can increase your e-commerce sales.

TL;DR

- Fix your rules before buying software: Don't just dump data into a new PIM system. You need a clear plan for who is in charge of checking and approving information so your digital catalog doesn't become a "disorganized swamp."

- Automate and standardize: Instead of typing everything by hand, use tools that automatically fix "messy" data from different suppliers.

- Build a "Golden Record": Keep one perfect, highly detailed version of your product data internally. This makes it easy to quickly tweak and send the right information to different websites whenever their rules change.

Establish data governance first

Many product data and PIM initiatives fail before they start, not because of the software but because there's no clear definition of who owns the data, who validates it, or who can change it.

That’s why governance comes first. It’s best practice to build a Data Quality Management System before you import a single record. Think of it like quality assurance in manufacturing: routine audits, defined roles, and inspection procedures that catch errors before they compound.

In practice: pull 50 items from your warehouse each month and check that what's in the PIM matches the physical product: dimensions, weights, specs. One misplaced decimal point ("0.5 lbs" entered as "5.0 lbs") can trigger a carrier shipping adjustment. Multiply that across thousands of SKUs and the cost adds up fast.
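The monthly spot check can be sketched in a few lines. This is a minimal illustration, assuming PIM values and warehouse measurements are both available as dictionaries; the field names, the sample size default, and the 2% tolerance are hypothetical choices, not a prescribed standard.

```python
import random

def sample_skus(skus, sample_size=50, seed=None):
    """Pick a random sample of SKUs to audit this month."""
    rng = random.Random(seed)
    pool = sorted(skus)  # sort first so a fixed seed gives a repeatable sample
    return rng.sample(pool, min(sample_size, len(pool)))

def audit_record(pim, measured, tolerance=0.02):
    """Return fields where the PIM value is missing or differs from the
    measured value by more than the relative tolerance (hypothetical 2%)."""
    mismatches = {}
    for field, actual in measured.items():
        listed = pim.get(field)
        if listed is None or abs(listed - actual) > tolerance * abs(actual):
            mismatches[field] = {"pim": listed, "measured": actual}
    return mismatches
```

Feeding it a record where the PIM lists 5.0 lbs and the scale reads 0.5 lbs flags `weight_lbs` and leaves matching fields alone.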

The GS1 Data Quality Framework notes that high-quality data requires a management system with inspection procedures and clearly defined roles. The "everyone updates everything" model of a shared spreadsheet has to go.

Define data stewardship roles

Assign specific people to specific segments of the catalog. A product manager might own technical specifications for the pneumatics category while a marketing lead owns commercial descriptions. Clear ownership prevents incomplete records from sitting in limbo with nobody accountable for them.

Implement validation gates

Don't let incomplete data into your active catalog. Best-practice governance means setting up gates that a record has to clear before getting published.

- Ingestion gate: Does the record have a primary identifier (SKU/GTIN) and a base description?

- Enrichment gate: Are all required attributes for this category filled in? (Voltage, material, dimensions, etc.)

- Commercial gate: Are high-resolution images and marketing copy attached?

Enforcing these stages keeps half-finished listings off your sales channels.
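The three gates above can be sketched as ordered checks that a record must pass before publishing. This is a rough illustration: the record layout, the `REQUIRED_BY_CATEGORY` table, and the 1,000-pixel threshold for "high-resolution" are all assumptions you would replace with your own rules.

```python
# Hypothetical per-category attribute requirements.
REQUIRED_BY_CATEGORY = {
    "motors": ["voltage", "material", "dimensions"],
}
MIN_IMAGE_WIDTH_PX = 1000  # assumed definition of "high-resolution"

def ingestion_gate(record):
    """A primary identifier (SKU or GTIN) and a base description exist."""
    has_id = bool(record.get("sku") or record.get("gtin"))
    return has_id and bool(record.get("description"))

def enrichment_gate(record):
    """Every attribute required for the record's category is filled in."""
    required = REQUIRED_BY_CATEGORY.get(record.get("category"), [])
    attrs = record.get("attributes", {})
    return all(attrs.get(name) not in (None, "") for name in required)

def commercial_gate(record):
    """At least one high-resolution image and marketing copy are attached."""
    images = record.get("images", [])
    has_hi_res = any(img.get("width_px", 0) >= MIN_IMAGE_WIDTH_PX for img in images)
    return has_hi_res and bool(record.get("marketing_copy"))

GATES = [
    ("ingestion", ingestion_gate),
    ("enrichment", enrichment_gate),
    ("commercial", commercial_gate),
]

def first_failed_gate(record):
    """Return the name of the first gate the record fails, or None if publishable."""
    for name, gate in GATES:
        if not gate(record):
            return name
    return None
```

Returning the name of the failed gate, rather than a plain yes or no, tells the responsible steward which stage the record is stuck in.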

Automate ingestion and normalization

Traditional PIM workflows run on manual entry. A vendor sends a PDF or a spreadsheet, and someone on your team types that data into the system by hand. Manual entry creates bottlenecks and compounds errors as catalog volume grows.

Modern best practice shifts from manual entry to automated ingestion. The challenge is that manufacturers describe the same things differently. One supplier uses "Length," another uses "L." One uses "Crimson," another uses "Red."

Normalization rules fix this. As data comes in, your system maps incoming attributes to a standardized internal taxonomy. When a customer filters for "Red," they see every relevant item regardless of how the original supplier labeled it.
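Here is a minimal sketch of that mapping step, assuming simple lookup tables. The alias tables and the function name are hypothetical; a real taxonomy would be far larger and probably stored outside the code.

```python
# Hypothetical alias tables mapping supplier vocabulary to an internal taxonomy.
ATTRIBUTE_ALIASES = {
    "l": "length",
    "len": "length",
    "length": "length",
    "colour": "color",
    "color": "color",
}
VALUE_ALIASES = {
    "color": {"crimson": "Red", "red": "Red"},
}

def normalize(raw):
    """Map supplier attributes to canonical names and values.

    Returns (normalized, unmapped). Unmapped attributes are kept aside
    for a human to review instead of being silently dropped.
    """
    normalized, unmapped = {}, {}
    for key, value in raw.items():
        canonical = ATTRIBUTE_ALIASES.get(key.strip().lower())
        if canonical is None:
            unmapped[key] = value
            continue
        if isinstance(value, str):
            cleaned = value.strip()
            value = VALUE_ALIASES.get(canonical, {}).get(cleaned.lower(), cleaned)
        normalized[canonical] = value
    return normalized, unmapped
```

With these tables, a supplier row of `{"L": "12 in", "Color": "Crimson", "Finish": "Matte"}` comes out with `length` and `color` normalized and `Finish` set aside as unmapped.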

As catalog complexity grows, this automation isn't a nice-to-have. It's the only way to keep up.

Forrester notes that PIM vendors are now expected to assemble data from both supplier and enterprise sources to keep up with the complexity of digital shelves.

Engineer for channel volatility

A lot of distributors build their PIM to feed their own website and stop there. The modern requirement is syndication: pushing data to Amazon Business, Grainger, Google Shopping, and specialized industry marketplaces.

The core challenge is channel volatility. Third-party endpoints change their data requirements frequently and without much warning. Google Merchant Center enforces strict validation rules on product attributes. If Google updates its Products Data Specification to require a new attribute for "unit pricing measure," your existing feed starts throwing errors and your ads may stop running.

The way to manage this is to decouple your internal master data from your channel outputs. Store the richest possible version of your product data internally, then use transformation layers to map that data to the specific, shifting requirements of each channel.

- Maintain a "Golden Record" as your single source of truth.

- Use channel-specific templates that update independently of your core data.

- Monitor feed health daily to catch rejection errors before they stack up.
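To make the decoupling concrete, here is a rough sketch in which each channel is described by a template that lives apart from the master record. The template layout, the `marketplace_x` channel, and the `required` lists are illustrative assumptions, not any channel's actual rules; only the Google attribute names (`id`, `title`, `gtin`, `unit_pricing_measure`) come from its public product data specification.

```python
# Channel templates: output field -> golden-record field, plus the fields
# this channel currently insists on. The "required" lists are hypothetical.
CHANNEL_TEMPLATES = {
    "google_shopping": {
        "fields": {
            "id": "sku",
            "title": "title",
            "gtin": "gtin",
            "unit_pricing_measure": "unit_pricing_measure",
        },
        "required": ["id", "title", "gtin"],
    },
    "marketplace_x": {  # hypothetical second channel
        "fields": {"part_number": "sku", "name": "title", "thread": "thread_size"},
        "required": ["part_number", "name"],
    },
}

def build_feed_item(golden, channel):
    """Project a golden record onto one channel's template.

    Returns (item, missing), where missing lists required output fields
    the golden record could not supply.
    """
    template = CHANNEL_TEMPLATES[channel]
    item = {
        out_field: golden[src_field]
        for out_field, src_field in template["fields"].items()
        if golden.get(src_field) not in (None, "")
    }
    missing = [f for f in template["required"] if f not in item]
    return item, missing
```

When a channel starts requiring a new attribute, the change is one entry in that channel's `required` list. The golden record itself does not move, and `missing` tells you which SKUs need enrichment before the next feed run.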

The best teams treat a feed error as a routine content task, not an IT ticket. They build automated error reporting that flags rejected SKUs directly to the data steward for that category, with no technical intervention needed. That speed matters because listing downtime on major marketplaces costs you revenue and search rank that can take weeks to recover.
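A minimal sketch of that routing, assuming the channel returns rejections as a list of SKU and reason pairs. The steward table, the email addresses, and the rejection format are hypothetical.

```python
from collections import defaultdict

# Hypothetical ownership table: catalog category -> responsible data steward.
STEWARDS = {
    "pneumatics": "pm-pneumatics@example.com",
    "hydraulics": "pm-hydraulics@example.com",
}
DEFAULT_STEWARD = "data-team@example.com"

def route_rejections(rejections, sku_categories):
    """Group rejected feed items by the steward who owns each SKU's category.

    rejections: list of {"sku": ..., "reason": ...} dicts (assumed format).
    sku_categories: mapping of SKU -> category.
    """
    queues = defaultdict(list)
    for rejection in rejections:
        category = sku_categories.get(rejection["sku"])
        steward = STEWARDS.get(category, DEFAULT_STEWARD)
        queues[steward].append(rejection)
    return dict(queues)
```

SKUs with an unknown category fall back to a default queue, so a rejection never disappears just because ownership was not assigned.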

Adopt standard identifiers

Proprietary part numbers work inside your ERP. Outside of it, they isolate your products from search engines, procurement systems, and trading partners.

GTINs and global standards

The Global Trade Item Number (GTIN) is how the internet looks up products. Search engines use GTINs to confirm that the item you're selling is the exact item a user is searching for. Schema.org's Product vocabulary includes GTIN properties, so you can expose the identifier in structured data that search engines read for rich results.
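Because a mistyped GTIN undermines all of this, it is worth validating the number before it enters the catalog. The sketch below applies the standard GS1 check-digit calculation (weights of 3 and 1 alternating from the right) and then builds a small Schema.org `Product` snippet. The function names are illustrative, and the JSON-LD shows only a few properties.

```python
import json

def is_valid_gtin(gtin):
    """Check length (GTIN-8/12/13/14) and the GS1 check digit."""
    if not (gtin.isascii() and gtin.isdigit()) or len(gtin) not in (8, 12, 13, 14):
        return False
    digits = [int(ch) for ch in gtin]
    body, check = digits[:-1], digits[-1]
    # Starting from the digit next to the check digit, weights alternate 3, 1, 3, ...
    total = sum(d * (3 if i % 2 == 0 else 1) for i, d in enumerate(reversed(body)))
    return (10 - total % 10) % 10 == check

def product_json_ld(name, sku, gtin):
    """Build a minimal Schema.org Product snippet; refuses invalid GTINs."""
    if not is_valid_gtin(gtin):
        raise ValueError(f"invalid GTIN: {gtin!r}")
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "gtin": gtin,
    }
    return json.dumps(data, indent=2)
```

Rejecting a bad check digit at ingestion is cheaper than discovering it as a marketplace rejection later.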

Beyond discovery, standards make data exchange possible. The GS1 Global Data Synchronization Network (GDSN) allows for continuous synchronization of standardized product data between trading partners. Ignore these standards and you force your customers to manually reconcile your catalog against their own systems.

Technical classification standards

For technical industries, classification standards like ETIM or UNSPSC define which attributes matter for a specific product class. ETIM International recently released ETIM xChange, a JSON-based standard for exchanging product master data. If a large contractor can import your catalog directly into their procurement software because you followed the ETIM standard, you have a real competitive advantage over a distributor still sending flat PDFs.

Prepare for the Digital Product Passport

Data quality is no longer purely a commercial concern. It's becoming a regulatory one. The EU's Ecodesign for Sustainable Products Regulation requires Digital Product Passports (DPP) to track sustainability information across the supply chain. This affects any distributor selling into the EU market or working with global manufacturers who standardize their packaging for compliance.

These regulations push you toward data models that can support granular sourcing, repairability, and carbon footprint attributes. Best practice now means structuring your PIM to handle provenance data: where a product came from and how it can be recycled.
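As a rough illustration of what "structuring your PIM to handle provenance data" could look like, here is a sketch that attaches a sustainability block to each product record. Every field name below is a hypothetical placeholder; the attributes actually required will depend on the product category and the final regulatory texts.

```python
from dataclasses import dataclass, field, asdict
from typing import Optional

@dataclass
class SustainabilityData:
    # Hypothetical attribute set, not the regulation's official field list.
    country_of_origin: Optional[str] = None
    recycled_content_pct: Optional[float] = None
    carbon_footprint_kg_co2e: Optional[float] = None
    repairability_notes: Optional[str] = None
    recycling_instructions: Optional[str] = None

@dataclass
class ProductRecord:
    sku: str
    title: str
    sustainability: SustainabilityData = field(default_factory=SustainabilityData)

def missing_sustainability_fields(record):
    """List the sustainability attributes that are still empty."""
    return [name for name, value in asdict(record.sustainability).items() if value is None]
```

Keeping these attributes in their own structure lets you report completeness per SKU without touching the commercial fields.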

This coincides with GS1's "Sunrise 2027" initiative, which prepares for the transition to 2D barcodes at the point of sale. According to GS1 US, these web-enabled 2D barcodes will connect consumers directly to dynamic product information including sustainability data, allergens, and sourcing details. Distributors who build their PIM architecture now to support these extended attribute sets will avoid a frantic retrofit when these standards become mandatory.
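Web-enabled 2D barcodes typically encode a GS1 Digital Link URI, which places the GTIN after the `01` application identifier and an optional batch or lot after `10`. The helper below is a simplified sketch under that assumption. It skips check-digit validation and other qualifiers, and the default resolver domain is a placeholder that many brands would replace with their own.

```python
from urllib.parse import quote

def digital_link_uri(gtin, lot=None, resolver="https://id.gs1.org"):
    """Build a simplified GS1 Digital Link URI for a GTIN and optional lot."""
    gtin14 = gtin.zfill(14)  # Digital Link URIs conventionally carry a 14-digit GTIN
    uri = f"{resolver}/01/{gtin14}"
    if lot:
        uri += f"/10/{quote(lot, safe='')}"  # percent-encode characters unsafe in a path
    return uri
```

If your PIM already stores validated GTINs and lot data, generating these URIs becomes a formatting step rather than a data collection project.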

Build trust mechanics for AI enrichment

AI has changed how teams approach product data. You can now use it to scrape manufacturer sites, parse PDF spec sheets, and generate marketing descriptions in seconds. At scale, it's the only realistic way to solve the "empty box" problem of records whose attribute fields are still blank.

But trusting AI without checks is a risk. A best-practice approach to AI requires strict trust mechanics.

- Provenance tracking: Log exactly where each data point came from. Was it entered by a human? Scraped from a specific URL? Generated by a model?

- Confidence scoring: AI suggestions should carry a confidence score. High-confidence matches can be auto-approved; low-confidence suggestions route to a human for review.

- Audit trails: Every change to a record needs to be written to an immutable log. If a product description changes, you need to know who or what changed it, and when.

Human-in-the-loop workflows let you get the speed of AI without giving up data integrity. Research on responsible GenAI adoption consistently points to defined responsibility and leadership oversight as the critical factors when deploying these tools in production.
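The three trust mechanics fit together in a small workflow. The sketch below is illustrative only: the 0.9 auto-approve threshold, the class names, and the in-memory log are assumptions, and a production audit trail would need append-only storage rather than a Python list.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

AUTO_APPROVE_THRESHOLD = 0.9  # hypothetical cutoff; tune per attribute and category

@dataclass(frozen=True)
class Suggestion:
    sku: str
    attribute: str
    value: str
    source: str        # provenance, e.g. "human:jane", "scraped:<url>", "model:<name>"
    confidence: float

@dataclass(frozen=True)
class AuditEntry:
    timestamp: str
    sku: str
    attribute: str
    old_value: object
    new_value: object
    source: str
    decision: str

class Catalog:
    def __init__(self):
        self.records = {}
        self.review_queue = []
        self._audit_log = []  # append-only by convention in this sketch

    @property
    def audit_log(self):
        return tuple(self._audit_log)  # read-only view for callers

    def submit(self, suggestion):
        """Auto-approve high-confidence suggestions; queue the rest for a human."""
        if suggestion.confidence >= AUTO_APPROVE_THRESHOLD:
            self._apply(suggestion, "auto_approved")
            return "auto_approved"
        self.review_queue.append(suggestion)
        return "needs_review"

    def approve(self, suggestion, reviewer):
        """A human accepts a queued suggestion."""
        self.review_queue.remove(suggestion)
        self._apply(suggestion, f"approved_by:{reviewer}")

    def _apply(self, suggestion, decision):
        record = self.records.setdefault(suggestion.sku, {})
        old_value = record.get(suggestion.attribute)
        record[suggestion.attribute] = suggestion.value
        self._audit_log.append(AuditEntry(
            datetime.now(timezone.utc).isoformat(), suggestion.sku,
            suggestion.attribute, old_value, suggestion.value,
            suggestion.source, decision,
        ))
```

Every applied change carries its source and the decision that let it through, so "who or what changed this, and when" can be answered from the log alone.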

The shift from management to growth

The definition of product information management is changing. It's moving from a defensive operation of fixing errors and organizing files to an offensive strategy focused on revenue. When product data is accurate, enriched, and standardized, it fuels advanced sales functions. It powers recommendation engines. It lets sales reps answer technical questions without hunting for a spec sheet. It lets customers easily filter 50,000 SKUs down to the exact three they need.

Organizations that solve the data problem find revenue that was previously invisible. Proton treats this as a data acquisition challenge, not just a storage one, using AI agents to actively collect and verify product details from across the web. When you treat product data as a strategic asset rather than a filing project, you build a foundation that scales.
